The AI industry is racing to perfect conversation. We think it’s optimising the wrong thing. This campaign explores a different frontier: AI that acts without being asked — and the design challenge that creates.

At a recent technology show, among the humanoids and robot dogs and the inevitable wall of smart screens, something caught our attention. A small companion robot built not for humans but for their pets. It followed a dog around the house, read its emotional state through behaviour and sound analysis, dispensed treats, initiated play. All autonomously. Without a single instruction.

Our first reaction was what most people’s would be: peak tech-show novelty. AI plus cute animals plus slightly strange industrial design.

But the more we watched, the more a different question formed. This robot had to observe behaviour it couldn’t ask about. Interpret context from a user who couldn’t explain what they needed. Make judgment calls about when to act and when to hold back — all without conversation, without confirmation, without a wake word or a prompt.

In a world where conversational AI dominates the design agenda, perhaps we’re building for the wrong interface entirely.

Smart speakers were supposed to change how we live with technology. Instead, they revealed a fundamental flaw in the premise. Conversation puts the work on the human. You have to remember what to ask, when to ask it, how to phrase it. You have to initiate every interaction. The intelligence is real. The agency remains yours.

Smart speakers didn't fail because they couldn't understand speech. They failed because they made humans do all the work.

The products winning in physical spaces increasingly do so through presence and autonomous judgment, not conversational sophistication. They notice. They act. They handle what you'd have missed or not had the bandwidth to address. They make life easier not by answering questions, but by not requiring you to ask them.

The real opportunity isn't building AI that talks better. It's building AI that notices more.

To explore what AI agency in physical spaces could actually look like, for people, for products, and for the design decisions that sit between them, we developed three scenarios. These are not product proposals. They are deliberate provocations: explorations of a possibility space designed to surface the questions that need answering before the products get built.

Each raises a genuine tension that design cannot eliminate, only navigate. We're not suggesting these are the right answers. We're asking whether they're the right questions.

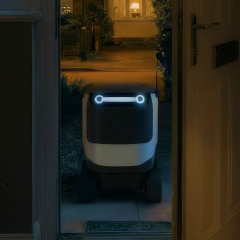

Mimi has been working late all week, skipping meals. Her AI notices and acts without being asked. This scenario explores financial agency in service of care: what it means for an AI to spend on your behalf, and whether that feels like support or intrusion. An AI whose actions carry real consequences, whether financial, logistical, or personal, requires a level of trust that most products haven't been designed to earn. The question isn't whether the technology can do this. It's whether the design can make it feel like care rather than overreach.

Yuki is anxious downstairs while her owner is on a call. The AI reads the behavioural cues, opens the garden door, and resolves what the owner couldn't see. This scenario explores emotional interpretation: translating invisible states into visible care. Should AI act on an emotional state without confirmation? When does noticing become surveillance? The line between a product that's helpfully attentive and one that's uncomfortably watchful is not drawn in the technology. It's drawn in the design, in how the product makes its awareness felt, and how much control it returns to the human.

Oscar wants to stay in bed. His AI opens the curtains anyway, not forcing him outside, but revealing what's waiting. When he closes them, it opens them again. This scenario explores the edge of AI advocacy: the difference between an AI that supports your intentions and one that gently challenges them. Who defines what you should notice? Where is the line between encouragement and pressure? These tensions can't be designed away. They can only be designed for: made navigable, transparent, and respectful of individual boundaries.

These three scenarios point toward something we believe will define what comes next: the shift from AI that responds to AI that acts.

That shift has profound implications for how products are designed. Autonomous action requires trust infrastructure that most current products haven't been built to establish. Users need to understand what an AI is noticing, believe it's noticing the right things, and feel genuinely in control of what it does next, even when they're not actively directing it. Building that relationship through physical design, rather than assuming it arrives with the capability, is the central challenge of ambient intelligence.

The companies that get this right won't be those who ship AI agency fastest. They'll be those who prove genuine value and reliable judgment before asking users to delegate. That validation, earned through design that makes autonomous behaviour feel trustworthy rather than opaque, is something competitors cannot replicate quickly.

The goal isn't predicting the right future. It's designing the conditions that let us choose which future to pursue.

This series is part of Studio ISO's ongoing exploration of AI in physical spaces. If you're working on products in this territory and want to think through the design challenges, get in touch.